The middle school version: You know how scientists run experiments to figure out what works? We do the same thing, but instead of test tubes, we use landing pages and sign-up forms. We try different "offers" — like a free trial vs. a demo vs. a guide — and Surface helps us see which one makes more people say "yes." It's like having a scoreboard for every experiment we run.

Why most teams can't run real funnel experiments

Everyone says they A/B test. Almost nobody actually does it well — at least not beyond the landing page.

Here's what "testing" looks like at most B2B companies: they change a headline on a landing page, run traffic for two weeks, and look at which version got more form submissions. That's not a funnel experiment. That's a page test. It tells you which headline got more clicks. It doesn't tell you which headline got more revenue.

Real funnel experiments test the full journey — from first click to closed deal. They test different offers (demo vs. free trial vs. consultation vs. audit), different qualification paths (strict vs. lenient), different routing strategies (AE vs. SDR), and different response approaches (instant email vs. text vs. phone call).

Most teams can't do this because the tools are fragmented. The landing page lives in one tool. The form lives in another. Routing lives in the CRM. Follow-up lives in a sales engagement platform. Measuring the impact of a change at the top of the funnel on conversion at the bottom requires stitching data across 4+ systems. Nobody does it because it's too hard.

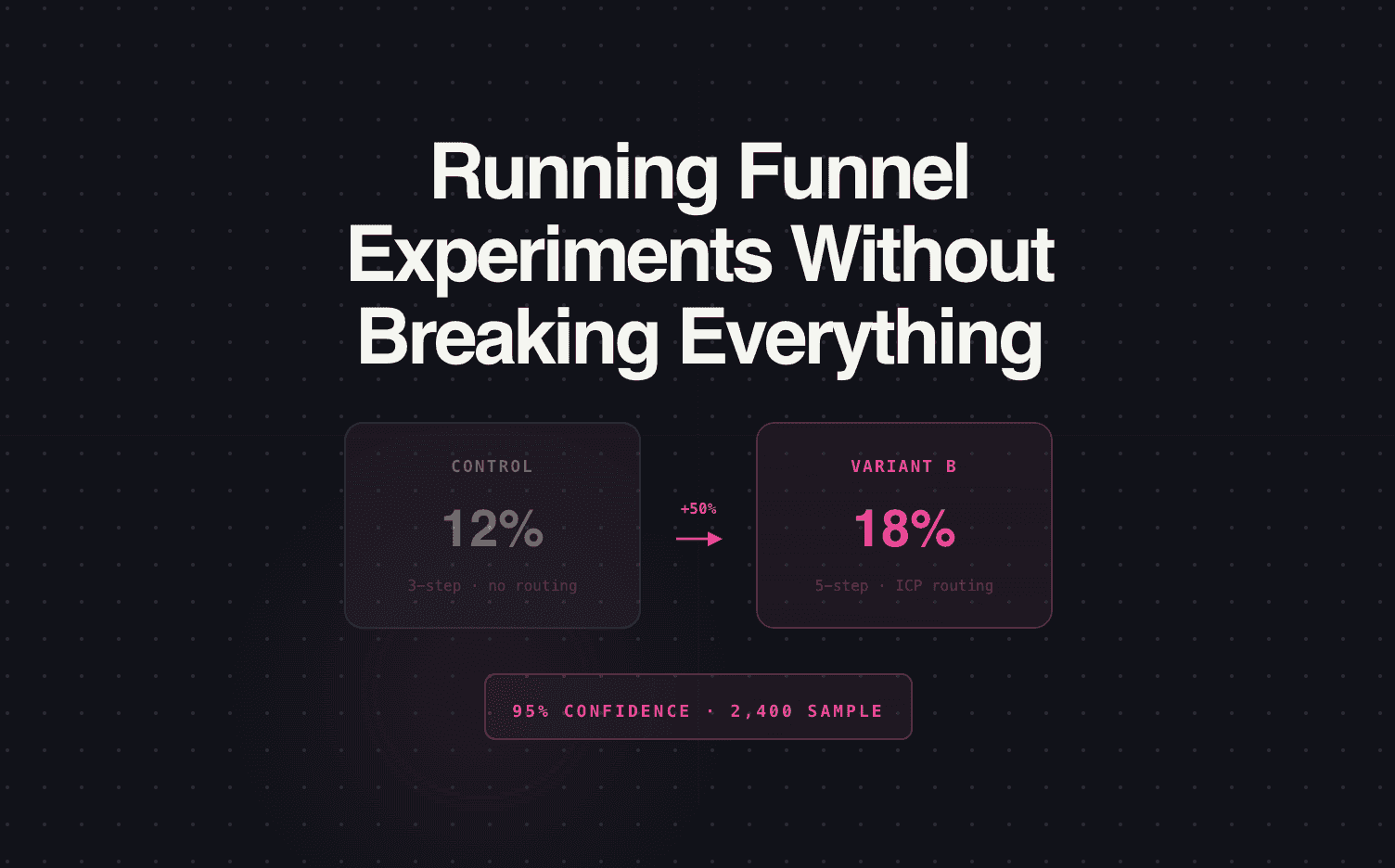

How we run experiments in Surface

Surface owns the full journey — form through meeting — which means we can test variables at any stage and measure the impact all the way through.

Here's what we tested last quarter:

| Experiment | What we changed | What we measured | Result |
|---|---|---|---|
| Offer test | Demo request vs. "Get a custom audit" vs. "See pricing" | Form-to-meeting rate | "Custom audit" converted 40% higher than demo request. "See pricing" had highest form completions but lowest meeting rate. |
| Qualification depth | 3-question form vs. 6-question form | Form completion rate and lead-to-meeting rate | 6-question form had 18% fewer completions but 2.3x higher lead-to-meeting rate. Net meetings: 35% more with the longer form. |
| Response type | Instant email vs. instant text message vs. no auto-response (rep calls manually) | Speed-to-meeting-booked | Instant text got 2x the meeting booking rate vs. email. Manual rep call was fastest when the rep responded within 5 minutes, but average rep response was 47 minutes, killing the advantage. |
| Routing strategy | Round-robin vs. territory-based vs. "best available" (first rep to respond gets the lead) | Meeting show rate | Territory-based had the highest show rate (78%) because reps had relevant context. "Best available" had fastest booking but lowest show rate (61%): speed without relevance doesn't hold. |

Each experiment ran on a specific percentage of traffic. Surface split the traffic at the form level — 50/50 or 70/30 depending on the test — and tracked every lead through to the outcome.
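To make the split concrete, here is a minimal sketch of one common way to divide traffic at the form level: hash a stable lead identifier into a bucket so the same lead always sees the same variant. This is an illustration only, not a description of how Surface implements it; the function name `assign_variant`, the experiment name, and the variant labels are all hypothetical.

```python
import hashlib


def assign_variant(lead_id: str, experiment: str, weights: dict[str, float]) -> str:
    """Deterministically map a lead to a variant according to traffic weights.

    Hypothetical helper: `weights` maps variant name -> share of traffic,
    e.g. {"a": 0.5, "b": 0.5} for 50/50 or {"a": 0.7, "b": 0.3} for 70/30.
    """
    if not weights:
        raise ValueError("at least one variant is required")
    total = sum(weights.values())
    # Hash experiment + lead ID so a lead always lands in the same bucket,
    # and so separate experiments split independently of each other.
    digest = hashlib.sha256(f"{experiment}:{lead_id}".encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in [0, 1).
    point = int(digest[:8], 16) / 0x100000000
    cumulative = 0.0
    last_variant = ""
    for variant, weight in weights.items():
        cumulative += weight / total
        last_variant = variant
        if point < cumulative:
            return variant
    # Guard against floating-point rounding on the final bucket.
    return last_variant


# Example: a 70/30 split between two hypothetical offers.
split = {"demo_request": 0.7, "custom_audit": 0.3}
print(assign_variant("lead-001", "offer-test", split))
```

Hashing instead of picking at random per page view means a returning visitor does not flip between variants, which keeps the downstream meeting data attributable to a single offer.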

Our biggest surprises

The longer form won. Every CRO in the world will tell you "shorter forms convert better." They do, at the form level. But form conversion rate is a vanity metric. The 6-question form gave us fewer submissions but dramatically more meetings, because the leads who completed it were pre-qualified and the reps had context. We would never have discovered this if we had stopped measuring at the submission instead of following each lead through to the meeting.
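The arithmetic behind that tradeoff is simple: meetings are visitors times form completion rate times lead-to-meeting rate, so a form can lose at the first step and still win overall. The sketch below uses made-up numbers purely for illustration; they are not the results from our experiment, and the function name is hypothetical.

```python
def net_meetings(visitors: int, completion_rate: float, lead_to_meeting_rate: float) -> float:
    """Expected meetings = visitors x form completion rate x lead-to-meeting rate."""
    return visitors * completion_rate * lead_to_meeting_rate


# Illustrative inputs only (not the figures from the table above).
VISITORS = 1000
short_form = net_meetings(VISITORS, completion_rate=0.20, lead_to_meeting_rate=0.10)
long_form = net_meetings(VISITORS, completion_rate=0.15, lead_to_meeting_rate=0.20)

# The short form wins on completions (20% vs. 15%),
# but the long form produces more meetings from the same traffic.
print(f"short form meetings: {short_form:.1f}")
print(f"long form meetings:  {long_form:.1f}")
print(f"relative lift:       {long_form / short_form - 1:.0%}")
```

Judging the test on completion rate alone would pick the short form; judging it on the product of both rates picks the long one.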

Text messages crushed email for instant response. We expected email to perform similarly to text. It didn't. Text felt personal and immediate. Email felt automated. The reply rate on instant text was 3x higher than email. This single insight changed our entire response strategy.

Speed without routing accuracy hurts. The "best available" model, where the first rep to respond gets the lead, sounded great: fastest rep wins. But leads ended up with reps who had no context on their territory, industry, or product interest. Meetings got booked fast, but the rep came in unprepared and fewer leads showed up. Territory-based routing was "slower" by 2 minutes but produced better outcomes because the rep's context matched the lead.

How to set up your first experiment

You don't need to test everything at once. Start with one variable:

The easiest experiment: Test two or three different offers on the same page, for example "Request a demo" vs. "Get a free consultation" vs. "See how it works for your industry." Measure form-to-meeting rate, not just form submissions. Run for 2–4 weeks with at least 100 submissions per variant.

If you can measure from form submit to booked meeting in one system (not stitching across platforms), you can run this experiment this month.
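Once the data is in, reading the result can look something like the sketch below. It computes form-to-meeting rate per variant, checks the 100-submission minimum, and runs a two-proportion z-test, which is one standard way to judge whether a gap is likely to be noise. The z-test is our suggestion here rather than part of the experiment design described above, and the variant names and counts are hypothetical.

```python
import math

MIN_SUBMISSIONS = 100  # minimum per variant, as suggested above


def form_to_meeting_rate(submissions: int, meetings: int) -> float:
    """Share of form submissions that turned into a booked meeting."""
    return meetings / submissions if submissions else 0.0


def enough_data(submissions_by_variant: dict[str, int]) -> bool:
    """True only when every variant has reached the minimum sample."""
    return all(n >= MIN_SUBMISSIONS for n in submissions_by_variant.values())


def two_proportion_p_value(subs_a: int, meets_a: int, subs_b: int, meets_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = meets_a / subs_a
    rate_b = meets_b / subs_b
    pooled = (meets_a + meets_b) / (subs_a + subs_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / subs_a + 1 / subs_b))
    if std_err == 0:
        return 1.0  # both rates are 0% or 100%; no measurable difference
    z = (rate_a - rate_b) / std_err
    # Two-sided tail probability under the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts for two offers.
results = {
    "request_demo": {"submissions": 120, "meetings": 12},
    "free_consultation": {"submissions": 120, "meetings": 30},
}

if enough_data({name: r["submissions"] for name, r in results.items()}):
    for name, r in results.items():
        rate = form_to_meeting_rate(r["submissions"], r["meetings"])
        print(f"{name}: {rate:.1%} form-to-meeting")
    a, b = results["request_demo"], results["free_consultation"]
    p = two_proportion_p_value(a["submissions"], a["meetings"], b["submissions"], b["meetings"])
    print(f"p-value: {p:.4f}")
else:
    print("Keep the test running: a variant has fewer than 100 submissions.")
```

A small p-value (commonly below 0.05) suggests the difference is unlikely to be chance; with three offers, compare them in pairs and be more cautious about borderline results.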

Start running funnel experiments in Surface — no engineering team required.